Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

The original version of this story appeared in Quanta Magazine.

Large language models work well because they are so large. The latest models from OpenAI, Meta and DeepSeek use hundreds of billions of “parameters”, the adjustable knobs that determine connections among data and get tweaked during the training process. With more parameters, the models are better able to identify patterns and connections, which in turn makes them more powerful and accurate.

However, this power comes at a cost. Training a model with hundreds of billions of parameters takes huge computational resources. To train its Gemini 1.0 Ultra model, for example, Google reportedly spent $191 million. Large language models (LLMs) also require considerable computational power each time they answer a request, which makes them notorious energy hogs. A single query to ChatGPT consumes about 10 times as much energy as a single Google search, according to the Electric Power Research Institute.

In response, some researchers are now thinking small. IBM, Google, Microsoft and OpenAI have all recently released small language models (SLMs) that use a few billion parameters, a fraction of their LLM counterparts.

Small models are not used as general-purpose tools like their larger cousins. However, they can excel at specific, more narrowly defined tasks, such as summarizing conversations, answering patient questions as a health care chatbot, and gathering data in smart devices. “For a lot of tasks, an 8 billion-parameter model is actually pretty good,” said Zico Kolter, a computer scientist at Carnegie Mellon University. Small models can also run on a laptop or cell phone instead of a huge data center. (There is no consensus on the exact definition of “small,” but the new models all max out at around 10 billion parameters.)
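A rough back-of-the-envelope calculation suggests why parameter count decides where a model can run. The sketch below estimates only the memory needed to hold the weights, ignoring activations and other runtime overhead. The function name is ours, and the 400 billion figure is a hypothetical stand-in for “hundreds of billions” rather than the size of any specific model.

```python
def model_memory_gb(num_parameters, bytes_per_parameter):
    """Estimate the memory needed to store a model's weights, in gigabytes.

    Counts weights only; real deployments also need memory for
    activations and other runtime state.
    """
    return num_parameters * bytes_per_parameter / 1e9


# Common storage formats: 16-bit floats use 2 bytes per parameter,
# 4-bit quantized weights use half a byte.
FP16_BYTES = 2.0
INT4_BYTES = 0.5

small_fp16 = model_memory_gb(8e9, FP16_BYTES)    # 8 billion parameters
small_int4 = model_memory_gb(8e9, INT4_BYTES)
large_fp16 = model_memory_gb(400e9, FP16_BYTES)  # hypothetical large model

print(f"8B model, 16-bit:   {small_fp16:.0f} GB")
print(f"8B model, 4-bit:    {small_int4:.0f} GB")
print(f"400B model, 16-bit: {large_fp16:.0f} GB")
```

Under these assumptions, an 8 billion-parameter model stored at 4 bits per weight needs about 4 GB, which fits in the memory of many laptops, while the hypothetical 400 billion-parameter model needs hundreds of gigabytes even before any runtime overhead.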

Researchers use a few tricks to optimize the training process for these small models. Large models often scrape raw training data from the internet, and this data can be disorganized, messy and hard to process. However, these large models can then generate a high-quality data set that can be used to train a small model. The approach, called knowledge distillation, gets the larger model to effectively pass on its training, like a teacher giving lessons to a student. “The reason [SLMs] get so good with such small models and such little data is that they use high-quality data instead of the messy stuff,” Kolter said.
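The teacher-and-student idea can be illustrated with a toy example. In the sketch below, a fixed scoring rule stands in for the large model, and a smaller linear model is trained only on the labels the teacher produces. Everything here is an assumption for illustration: the function names, the teacher's weights, and the two-number inputs are hypothetical, and real distillation involves language models and text, not a handful of numbers.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def teacher_predict(x):
    """Hypothetical stand-in for a large model: a fixed rule with an
    interaction term that the simpler student cannot represent."""
    x0, x1 = x
    return sigmoid(2.0 * x0 - 1.0 * x1 + 0.5 + 0.3 * x0 * x1)


def make_distilled_dataset(n, seed=0):
    """Sample inputs and let the teacher label them with probabilities."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = (rng.uniform(-2, 2), rng.uniform(-2, 2))
        data.append((x, teacher_predict(x)))
    return data


def train_student(data, epochs=200, lr=0.5):
    """Fit a small logistic model to the teacher's soft labels
    using batch gradient descent on the cross-entropy loss."""
    w0, w1, b = 0.0, 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        g0 = g1 = gb = 0.0
        for (x0, x1), target in data:
            # Gradient of cross-entropy with respect to the pre-activation
            err = sigmoid(w0 * x0 + w1 * x1 + b) - target
            g0 += err * x0
            g1 += err * x1
            gb += err
        w0 -= lr * g0 / n
        w1 -= lr * g1 / n
        b -= lr * gb / n
    return w0, w1, b


def agreement(student, data):
    """Fraction of examples where student and teacher pick the same class."""
    w0, w1, b = student
    hits = sum(
        (sigmoid(w0 * x0 + w1 * x1 + b) >= 0.5) == (target >= 0.5)
        for (x0, x1), target in data
    )
    return hits / len(data)


dataset = make_distilled_dataset(500)
student = train_student(dataset)
print(f"Student agrees with teacher on {agreement(student, dataset):.0%} of examples")
```

The student never sees any original “raw” data, only the clean examples the teacher generates, which is the point Kolter makes about high-quality data.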

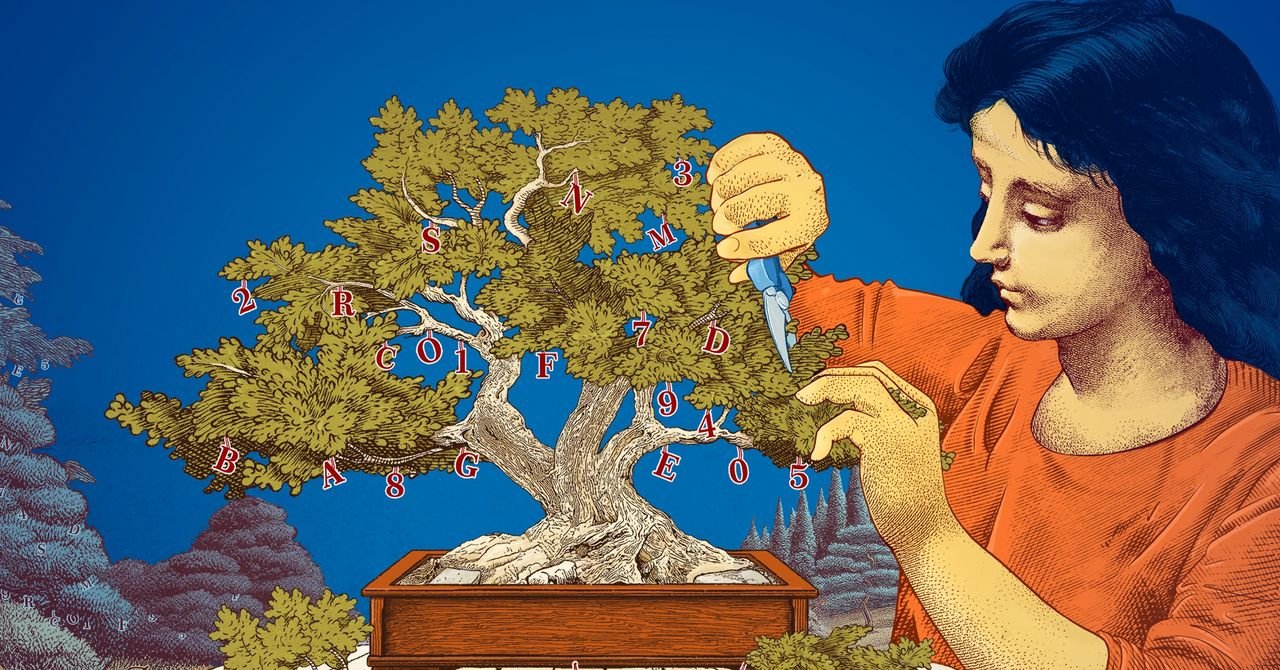

Researchers have also explored ways to create small models by starting with large ones and trimming them down. One method, known as pruning, entails removing unnecessary or inefficient parts of a neural network, the sprawling web of connected data points that underlies a large model.

Pruning was inspired by a real-life neural network, the human brain, which gains efficiency by snipping connections between synapses as a person ages. Today’s pruning approaches trace back to a 1989 paper in which the computer scientist Yann LeCun, now at Meta, argued that up to 90 percent of the parameters in a trained neural network could be removed without sacrificing efficiency. He called the method “optimal brain damage.” Pruning can help researchers fine-tune a small language model for a particular task or environment.
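One simple way to see pruning in action is magnitude pruning, which zeroes out the weights with the smallest absolute values. This is a simpler criterion than the one in the 1989 paper, which ranks parameters by an estimate of how much removing each one would hurt the model. The sketch below is only an illustration: the function names and the tiny weight matrix are made up, and it assumes a rectangular list of lists.

```python
def magnitude_prune(weights, fraction):
    """Return a copy of a rectangular weight matrix with the given
    fraction of smallest-magnitude weights set to zero."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    flat = [w for row in weights for w in row]
    k = int(len(flat) * fraction)  # number of weights to remove
    # Flat indices of the k weights with the smallest absolute value
    order = sorted(range(len(flat)), key=lambda i: abs(flat[i]))
    drop = set(order[:k])
    cols = len(weights[0]) if weights else 0
    return [
        [0.0 if (r * cols + c) in drop else w for c, w in enumerate(row)]
        for r, row in enumerate(weights)
    ]


def sparsity(weights):
    """Fraction of weights that are exactly zero."""
    flat = [w for row in weights for w in row]
    return sum(1 for w in flat if w == 0.0) / len(flat)


# Made-up weights for illustration only
weights = [
    [0.8, -0.05, 0.3],
    [0.01, -0.9, 0.02],
]
pruned = magnitude_prune(weights, 0.5)
print(pruned)            # [[0.8, 0.0, 0.3], [0.0, -0.9, 0.0]]
print(sparsity(pruned))  # 0.5
```

In practice, a pruned network is usually retrained briefly afterward so the remaining weights can compensate for what was removed.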

For researchers interested in how language models do the things they do, smaller models offer an inexpensive way to test novel ideas. And because they have fewer parameters than large models, their reasoning might be more transparent. “If you want to make a new model, you need to try things,” said Leshem Choshen, a research scientist at the MIT-IBM Watson AI Lab. “Small models allow researchers to experiment with lower stakes.”

The big, expensive models, with their ever-increasing parameters, will remain useful for applications like generalized chatbots, image generators and drug discovery. However, for many users, a small, targeted model will work just as well, while being easier for researchers to train and build. “These efficient models can save money, time and compute,” Choshen said.

Original story reprinted with permission from Quanta Magazine, an editorially independent publication of the Simons Foundation whose mission is to enhance public understanding of science by covering research developments and trends in mathematics and the physical and life sciences.