Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

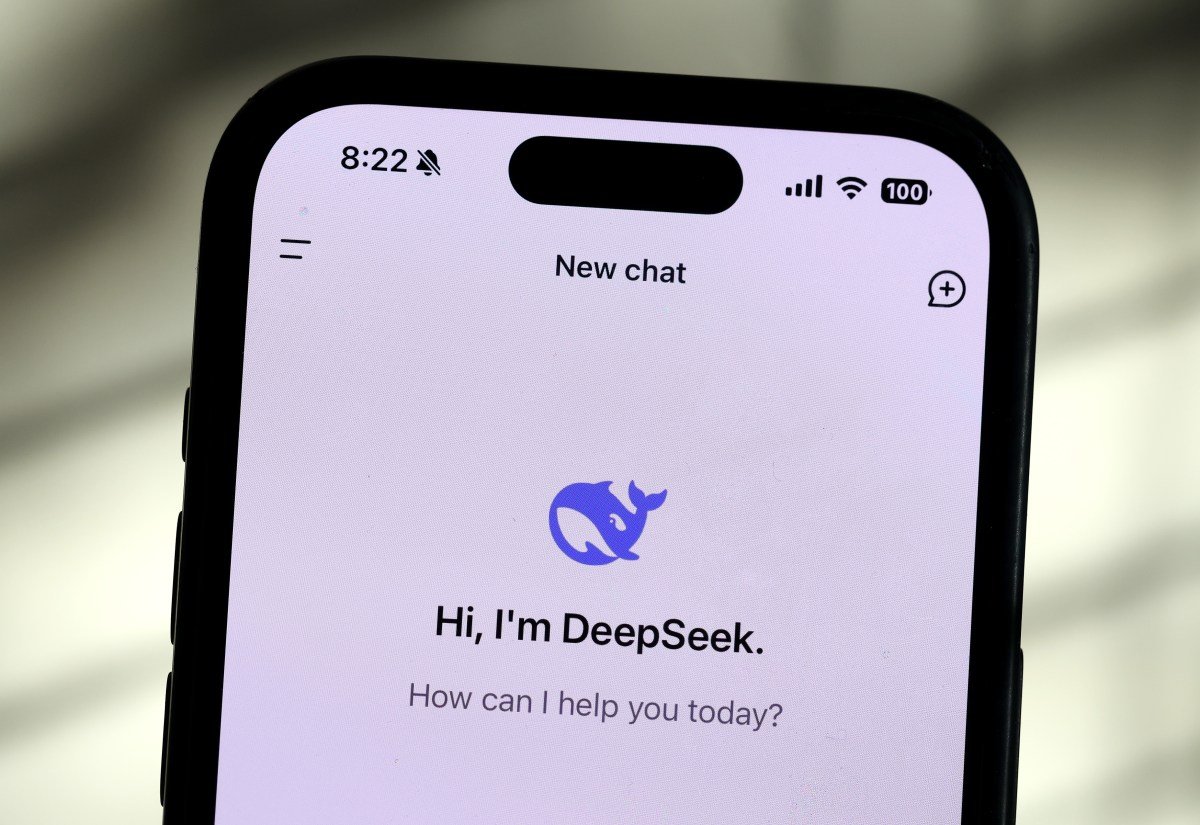

DeepSeek's updated R1 reasoning AI model may be getting most of the AI community's attention this week. However, the Chinese AI lab has also released a smaller, "distilled" version of its new R1, DeepSeek-R1-0528-Qwen3-8B, which DeepSeek claims beats comparably sized models on certain benchmarks.

The smaller updated R1, which was built using the Qwen3-8B model that Alibaba launched in May as its foundation, performs better than Google's Gemini 2.5 Flash on AIME 2025, a collection of challenging math questions.

DeepSeek-R1-0528-Qwen3-8B also nearly matches Microsoft's recently released Phi 4 reasoning plus model on another math skills test, HMMT.

So-called distilled models such as DeepSeek-R1-0528-Qwen3-8B are generally less capable than their full-size counterparts. On the plus side, they are far less computationally demanding. According to the cloud platform NodeShift, Qwen3-8B requires a GPU with 40GB-80GB of RAM to run (e.g., an Nvidia H100). The full-size new R1 needs about a dozen 80GB GPUs.
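To put the hardware figures in rough perspective, the memory needed just to hold a model's weights can be estimated from its parameter count. The sketch below is a back-of-the-envelope calculation, not a measurement: it assumes a nominal 8 billion parameters (taken from the "8B" in the model name) and a few common numeric precisions, and the function name `weight_memory_gb` is made up for this illustration. It covers weights only and ignores activations, the attention cache, and framework overhead, so real requirements are higher.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory for model weights alone, in GB (10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


# Assumption: "8B" means roughly 8 billion parameters.
PARAMS_8B = 8e9

# Common storage precisions and their size per parameter, in bytes.
PRECISIONS = [("fp32", 4.0), ("fp16/bf16", 2.0), ("int8", 1.0), ("int4", 0.5)]

for label, nbytes in PRECISIONS:
    print(f"{label:>9}: {weight_memory_gb(PARAMS_8B, nbytes):.1f} GB")
```

Under these assumptions the weights alone fit comfortably inside a single 80GB accelerator at 16-bit precision, which is consistent with the single-GPU figure quoted above.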

DeepSeek trained DeepSeek-R1-0528-Qwen3-8B by taking text generated by the updated R1 and using it to fine-tune Qwen3-8B. On a dedicated web page for the model on the AI dev platform Hugging Face, DeepSeek describes DeepSeek-R1-0528-Qwen3-8B as "for both academic research on reasoning models and industrial development focused on small-scale models."
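Distillation of this kind generally starts by collecting a larger "teacher" model's outputs and saving them as fine-tuning examples for the smaller "student" model. The sketch below shows only that data-collection step, in generic form. It is not DeepSeek's pipeline: the function names, the record fields (`prompt`, `completion`), and the stand-in teacher are all hypothetical, and the fine-tuning step itself is omitted.

```python
import json
from typing import Callable, Dict, Iterable, List


def build_distillation_records(
    prompts: Iterable[str],
    teacher_generate: Callable[[str], str],
) -> List[Dict[str, str]]:
    """Pair each prompt with the teacher's output, skipping empty outputs."""
    records = []
    for prompt in prompts:
        completion = teacher_generate(prompt)
        if not completion.strip():
            continue  # nothing useful to learn from an empty answer
        records.append({"prompt": prompt, "completion": completion})
    return records


def to_jsonl(records: List[Dict[str, str]]) -> str:
    """Serialize records as JSON Lines, one example per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


def fake_teacher(prompt: str) -> str:
    """Hypothetical stand-in for a call to the large teacher model."""
    return f"Reasoning about: {prompt}\nFinal answer: (placeholder)"


if __name__ == "__main__":
    examples = build_distillation_records(
        ["What is 12 * 7?", "Is 91 prime?"], fake_teacher
    )
    print(to_jsonl(examples))
```

In practice `fake_teacher` would be replaced by a call to the teacher model, and the resulting file would be fed to a supervised fine-tuning job for the student.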

DeepSeek-R1-0528-Qwen3-8B is available under a permissive MIT license, which means it can be used commercially without restriction. Several hosts, including LM Studio, already offer the model through an API.