Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

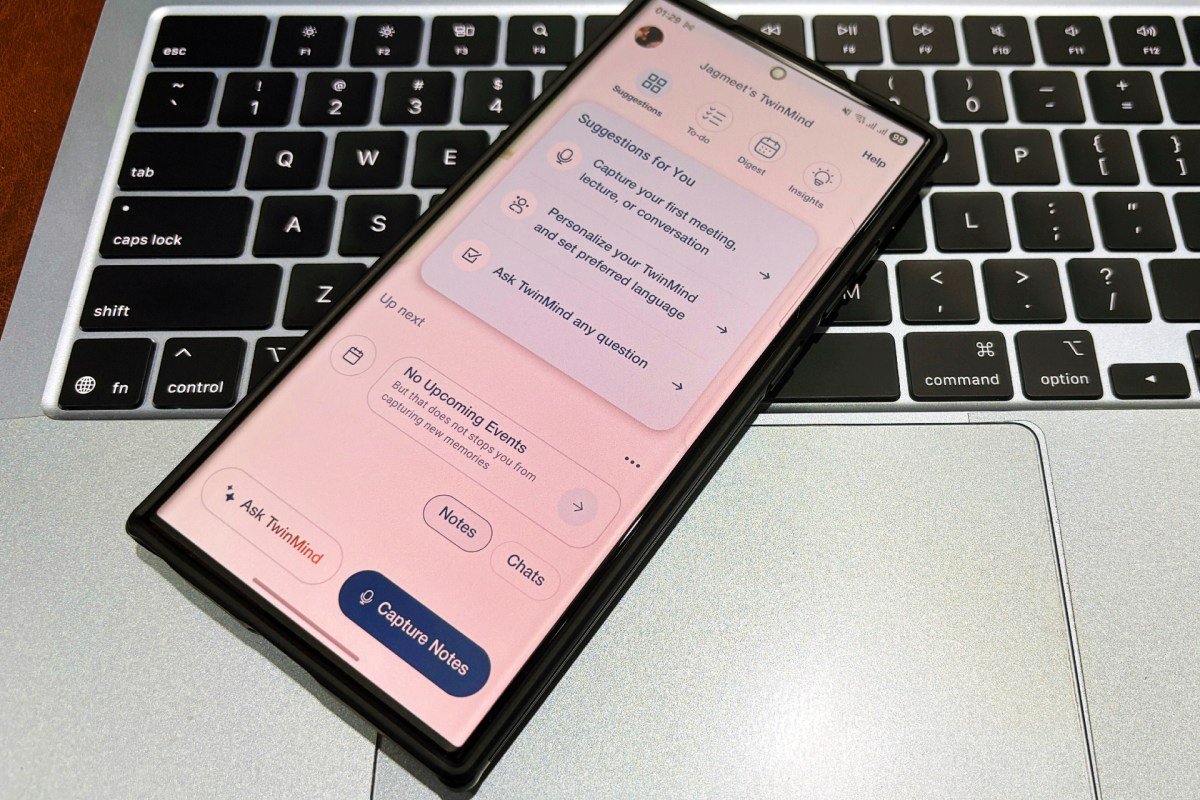

Three former Google X scientists want to give you a second brain, not in the sci-fi or chip-in-your-head sense, but through an AI-powered app that gains context by listening to what you say in the background. Their startup, TwinMind, has raised $5.7 million in seed funding and released an Android version along with a new AI speech model. It also has an iPhone version.

Founded by Daniel George (CEO) and his former Google X colleagues Sunny Tang and Mahi Karim (both CTOs), TwinMind runs in the background, capturing ambient speech (with user permission) to build a personal knowledge graph.

By turning spoken thoughts, meetings, lectures, and conversations into structured memory, the app can generate AI-powered notes, to-dos, and answers. It works offline, processing audio in real time to transcribe it on-device, and can capture audio continuously for 16 to 17 hours without draining the device's battery, the founders say. The app can also back up user data so that conversations can be recovered if the device is lost, though users can opt out of this. It supports real-time translation in more than 100 languages.

TwinMind differentiates itself from AI meeting note-takers such as Otter, Granola, and Fireflies by capturing audio passively in the background all day. To make this possible, the team built a low-level service in pure Swift that runs natively on the iPhone. In contrast, many competitors use React Native and rely on cloud-based processing, which Apple restricts from running in the background for extended periods, George said in an exclusive interview.

“We spent about six to seven months last year just perfecting this audio capture and figuring out a lot of hacks around Apple's walled garden,” he told TechCrunch.

George left Google X in 2021 and got the idea for TwinMind that same year while working at JPMorgan as a vice president and applied AI lead, attending back-to-back meetings every day. To save time, he built a script that captured audio, transcribed it on his iPad, and fed it into ChatGPT, which began to understand his projects and even to generate usable code. Impressed by the results, he shared it with friends and posted about it on Blind, where others showed interest but did not want anything running on their work laptops. That led him to build an app that could run on a personal phone, listening quietly during meetings to gather useful context.

In addition to the mobile app, TwinMind offers a Chrome extension that gathers additional context through browser activity. Using vision AI, it can scan open tabs and interpret content from various platforms, including email, Slack, and Notion.

The startup even used the extension itself to shortlist interns from the more than 850 applications it received this summer.

“We opened all the LinkedIn profiles and CVs of the 854 applicants in browser tabs, then asked the Chrome extension to rank the best candidates,” George said. “It did a great job. That's how we hired our final four interns.”

He noted that current AI chatbots, including OpenAI's ChatGPT and Anthropic's Claude, cannot easily process hundreds of documents or parse sign-in-protected tools such as LinkedIn or Gmail to gather context. Similarly, AI-powered browsers such as those from Perplexity and The Browser Company lack access to your offline conversations and in-person meetings.

The startup currently has more than 30,000 users, about 15,000 of whom are active each month. George says about 20-30% of TwinMind's users also use the Chrome extension.

Although the United States is its largest user base so far, the startup is also seeing traction in India, Brazil, the Philippines, Ethiopia, Kenya, and Europe.

TwinMind targets a general audience, though 50-60% of its users are currently professionals, about 25% are students, and the remaining 20-25% are individuals who use it for personal purposes.

George told TechCrunch that his father is among those using TwinMind to write an autobiography.

One of the significant drawbacks of AI is the possibility of compromising user privacy. George, however, said that TwinMind does not train its models on user data and is designed to work without sending recordings to the cloud. Unlike many other AI note-taking apps, TwinMind does not let users access audio recordings later, because the audio is deleted on the fly and only the transcribed text is stored locally in the app, he noted.

TwinMind's co-founders spent several years working on various projects at Google X. George told TechCrunch that he alone worked on six projects, including iyO, the team behind AI-powered earbuds that recently made headlines for suing OpenAI and Jony Ive. This experience helped the TwinMind team move quickly from idea to product.

“Google X was actually the perfect place to prepare for starting your own company,” George said. “There are about 30 to 40 startup-like projects happening at any time. Nobody else gets to work at six early-stage startups over two or three years before starting their own, not in such a short time.”

Before joining Google, George worked on applying deep learning to gravitational-wave astrophysics as part of the Nobel Prize-winning LIGO group at the University of Illinois' National Center for Supercomputing Applications. He completed his PhD in AI for astrophysics in just one year, at age 24, an achievement that led him to join Stephen Wolfram's research lab in 2017 as a deep learning and AI researcher.

That early connection with Wolfram came full circle a few years later: he wrote the first check for TwinMind, his first-ever investment in a startup. The recent seed round was led by Streamlined Ventures, with participation from other investors, including Sequoia Capital and Wolfram. The round values TwinMind at $60 million post-money.

In addition to its apps and browser extension, TwinMind has also launched its Ear-3 model, the successor to its existing Ear-2. The new model supports more than 140 languages worldwide and has a word error rate of 5.26%, the startup said. It can also recognize different speakers in a conversation, with a speaker diarization error rate of 3.8%.

The new AI model is a fine-tuned blend of several open-source models, trained on a curated set of human-annotated internet data, including podcasts, videos, and films.

“We have found that the more languages you support, the better the model gets at understanding accents and regional dialects, because it is training on a wider range of speakers,” George said.

The model costs $0.23 per hour and will be available through an API to developers and enterprises over the next few weeks.

Unlike Ear-2, Ear-3 does not support a fully offline experience, as it is larger in size and runs in the cloud. However, the app automatically switches to Ear-2 if the internet connection drops and then moves back to Ear-3 when it returns, George said.

With the introduction of Ear-3, TwinMind now offers a Pro subscription at $15 per month, with a larger context window of up to 2 million tokens and email support within 24 hours. The free version still exists with all of its existing features, including unlimited transcription and on-device speech recognition.

The startup currently has a team of 11 members. It plans to hire a few designers to improve its user experience and to set up a business development team to sell its API. It also plans to spend some money on acquiring new users.