Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

On Saturday, Triplegangers CEO Alexander Tomchuk was alerted that his company’s e-commerce site was down. It appeared to be some kind of distributed denial-of-service attack.

He soon discovered that the culprit was a bot from OpenAI that was relentlessly trying to scrape his entire, massive site.

“We have over 65,000 products, each product has a page,” Tomchuk told TechCrunch. “There are at least three pictures per page.”

OpenAI was sending “thousands” of server requests trying to download hundreds of thousands of photos with their detailed descriptions.

“OpenAI used 600 IPs to scrape data, and we’re still analyzing last week’s logs, probably more,” he said of the IP addresses the bot used to try to access his site.

“Their crawlers were crushing our site,” he said. “It was basically a DDoS attack.”

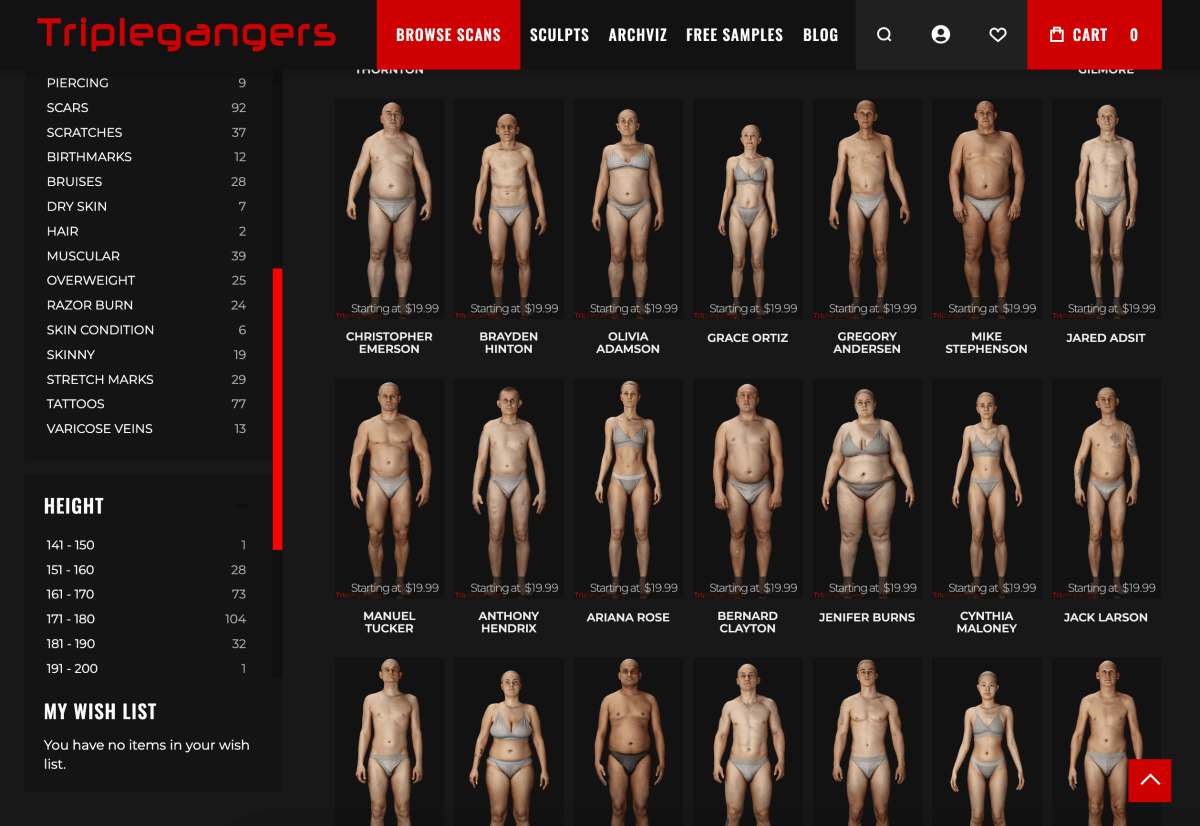

Triplegangers’ website is its business. The seven-employee company has spent more than a decade building what it calls the largest database of “human digital doubles” on the web, meaning 3D image files scanned from real human models.

It sells 3D object files as well as photos — from hands to hair, skin and whole bodies — to 3D artists, video game makers, and anyone looking to digitally recreate authentic human features.

Tomchuk’s team, based in Ukraine but also licensed in the US out of Tampa, Florida, has a terms of service page on its site that prohibits bots from taking its images without permission. But that alone did nothing. Websites must use a properly configured robots.txt file with tags specifically telling OpenAI’s bot, GPTBot, to leave the site alone. (OpenAI also has a couple of other bots, ChatGPT-User and OAI-SearchBot, which have their own tags, according to its crawler information page.)

Robots.txt, otherwise known as the Robots Exclusion Protocol, was created to tell search engines what not to crawl as they index the web. OpenAI says on its informational page that it honors such files when they are configured with its own do-not-crawl tags, though it warns that it can take its bots up to 24 hours to recognize an updated robots.txt file.
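As a rough illustration of what such a file can look like, the sketch below embeds a robots.txt that disallows the three OpenAI crawler names mentioned above and checks it with Python’s standard `urllib.robotparser`. The file contents, the `is_allowed` helper, and the `example.com` URL are assumptions made for this example, not Triplegangers’ actual configuration, and a passing check says nothing about whether a given crawler actually complies.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block the OpenAI crawler names cited in the
# article and leave every other user agent unrestricted.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ChatGPT-User
Disallow: /

User-agent: OAI-SearchBot
Disallow: /

User-agent: *
Allow: /
"""

# Placeholder URL used only for the check below.
EXAMPLE_URL = "https://example.com/products/1"


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for agent in ("GPTBot", "ChatGPT-User", "OAI-SearchBot", "SomeOtherBot"):
        verdict = "allowed" if is_allowed(ROBOTS_TXT, agent, EXAMPLE_URL) else "blocked"
        print(f"{agent}: {verdict}")
```

The check is passed the short crawler token rather than a full browser-style user-agent string, because that token is what the rules in the file are keyed on.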

In Tomchuk’s experience, if a site isn’t using robots.txt correctly, OpenAI and others take that to mean they can scrape to their heart’s content. It is not an opt-in system.

To add insult to injury, not only was Triplegangers knocked offline by OpenAI’s bot during US business hours, but Tomchuk expects a jacked-up AWS bill thanks to all the CPU and download activity from the bot.

Robots.txt is also not a failsafe. AI companies comply with it voluntarily. Another AI startup, Perplexity, was famously called out by a Wired investigation last summer when some evidence implied Perplexity was not honoring it.

By Wednesday, after days of OpenAI’s bot returning, Triplegangers had a properly configured robots.txt file in place, as well as a Cloudflare account set up to block GPTBot and several other bots Tomchuk discovered, such as Barkrowler (an SEO crawler) and Bytespider (TikTok’s crawler). Tomchuk is also hopeful that he has blocked crawlers from other AI model companies. As of Thursday morning, the site had not crashed, he said.

But Tomchuk still has no reasonable way to find out what OpenAI successfully took, or to get that material removed. He found no way to contact OpenAI and ask. OpenAI did not respond to TechCrunch’s request for comment. And OpenAI has so far failed to deliver its long-promised opt-out tool, as TechCrunch recently reported.

This is a particularly complicated problem for Triplegangers. “We’re in a business where rights are a serious issue, because we scan real people,” he said. With laws like Europe’s GDPR, “they can’t just take somebody’s picture on the web and use it.”

Triplegangers’ website was also a particularly tasty find for AI crawlers. Multibillion-dollar-valued startups, such as Scale AI, have been created where humans painstakingly tag images to train AI. Triplegangers’ site has photos tagged in detail: race, age, tattoos vs. scars, all body types, and more.

The irony is that it was the greed of the OpenAI bot that alerted Triplegangers to just how exposed it was. If it had scraped more gently, Tomchuk would never have known, he said.

“It’s scary because these companies seem to be using a loophole to crawl data by saying, ‘You can opt out if you update your robots.txt with our tags,’” says Tomchuk, but that puts the onus on the business owner to understand how to block them.

He wants other small online businesses to know that the only way to discover if an AI bot is taking a website’s copyrighted material is to actively look. He is certainly not alone in being terrorized by them. Owners of other websites recently told Business Insider how OpenAI bots crashed their sites and ran up their AWS bills.

The problem grew massively in 2024. New research from digital advertising company DoubleVerify found that AI crawlers and scrapers caused an 86% increase in “general invalid traffic” in 2024, that is, traffic that doesn’t come from a real user.

Still, “most sites remain unaware that they were scraped by these bots,” warns Tomchuk. “Now we have to monitor log activity every day to see these bots.”
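The kind of daily log check Tomchuk describes can be sketched in a few lines. The example below assumes access logs in the common “combined” format and flags requests whose user-agent string contains one of a handful of crawler names taken from this article; the `summarize_crawlers` helper, the token list, and the sample log lines are all hypothetical, and a crawler that disguises its user agent would slip past this kind of check.

```python
import re
from collections import defaultdict

# Crawler names mentioned in the article; extend as needed (assumption).
CRAWLER_TOKENS = ("GPTBot", "ChatGPT-User", "OAI-SearchBot", "Bytespider")

# Combined log format:
# ip ident user [time] "request" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]*\] "[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_crawlers(lines):
    """Count requests and distinct client IPs per known crawler token."""
    stats = defaultdict(lambda: {"requests": 0, "ips": set()})
    for line in lines:
        match = LOG_LINE.match(line)
        if match is None:
            continue  # skip lines that are not in the expected format
        agent = match.group("agent").lower()
        for token in CRAWLER_TOKENS:
            if token.lower() in agent:
                stats[token]["requests"] += 1
                stats[token]["ips"].add(match.group("ip"))
                break
    return {
        token: {"requests": s["requests"], "distinct_ips": len(s["ips"])}
        for token, s in stats.items()
    }


# Made-up sample lines using documentation-reserved IP ranges.
SAMPLE_LOG = [
    '203.0.113.5 - - [01/Jan/2000:10:00:00 +0000] "GET /product/1 HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [01/Jan/2000:10:00:01 +0000] "GET /product/2 HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [01/Jan/2000:10:00:02 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

if __name__ == "__main__":
    for token, summary in summarize_crawlers(SAMPLE_LOG).items():
        print(token, summary)
```

Counting distinct IPs alongside requests matters here because, as Tomchuk notes, a single crawler can arrive from hundreds of addresses, so per-IP totals alone would understate its load.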

When you think about it, the whole model works a bit like a mafia shakedown: AI bots will take what they want if you don’t have protection.

“They should be asking for permission, not just scraping data,” Tomchuk said.
