Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Stanford University researchers paid 1,052 people $60 to read the first two lines of The Great Gatsby to an app. That done, an AI that looks like a 2D sprite from an SNES-era Final Fantasy game asked participants to tell their life stories. The scientists took those interviews and built an AI from them that they say replicates the participants’ behavior with 85% accuracy.

The study, titled Generative Agent Simulations of 1,000 People, is a joint venture between Stanford and scientists working for Google’s DeepMind AI research lab. The pitch is that building AI agents based on random humans can help policymakers and businesspeople better understand the public. Why use focus groups or poll the public when you can talk to them once, create an LLM based on that conversation, and then keep their thoughts and opinions forever? Or, at least, as close an approximation of those thoughts and feelings as an LLM is able to recreate.

“This work provides a foundation for new tools that can aid in the investigation of individual and collective behavior,” the paper’s abstract says.

“For example, how might different people respond to new public health policies and messages, respond to product launches, or respond to major shocks?” the paper continues. “When simulated individuals are assembled into cohorts, these simulations can help pilot interventions, develop complex theories to capture subtle causal and contextual interactions, and expand our understanding of structures such as institutions and networks across domains such as economics, sociology, organization, and political science.”

All of these prospects rest on a two-hour interview fed into an LLM, which then answered questions mostly the same way as its real-life counterpart.

Most of the process was automated. The researchers contracted Bovitz, a market research firm, to recruit participants. The goal was to get as broad a sample of the US population as possible while being limited to 1,000 people. To complete the study, participants signed up for an account in a purpose-built interface, created a 2D sprite avatar, and began speaking with an AI interviewer.

The questions and interview style are a modified version of those used by the American Voices Project, a joint Stanford and Princeton University project that interviews people across the country.

Each interview began with the participant reading the first two lines of The Great Gatsby (“In my younger and more vulnerable years my father gave me some advice that I’ve been turning over in my mind ever since. ‘Whenever you feel like criticizing any one,’ he told me, ‘just remember that all the people in this world haven’t had the advantages that you’ve had.’”) as a way to calibrate the audio.

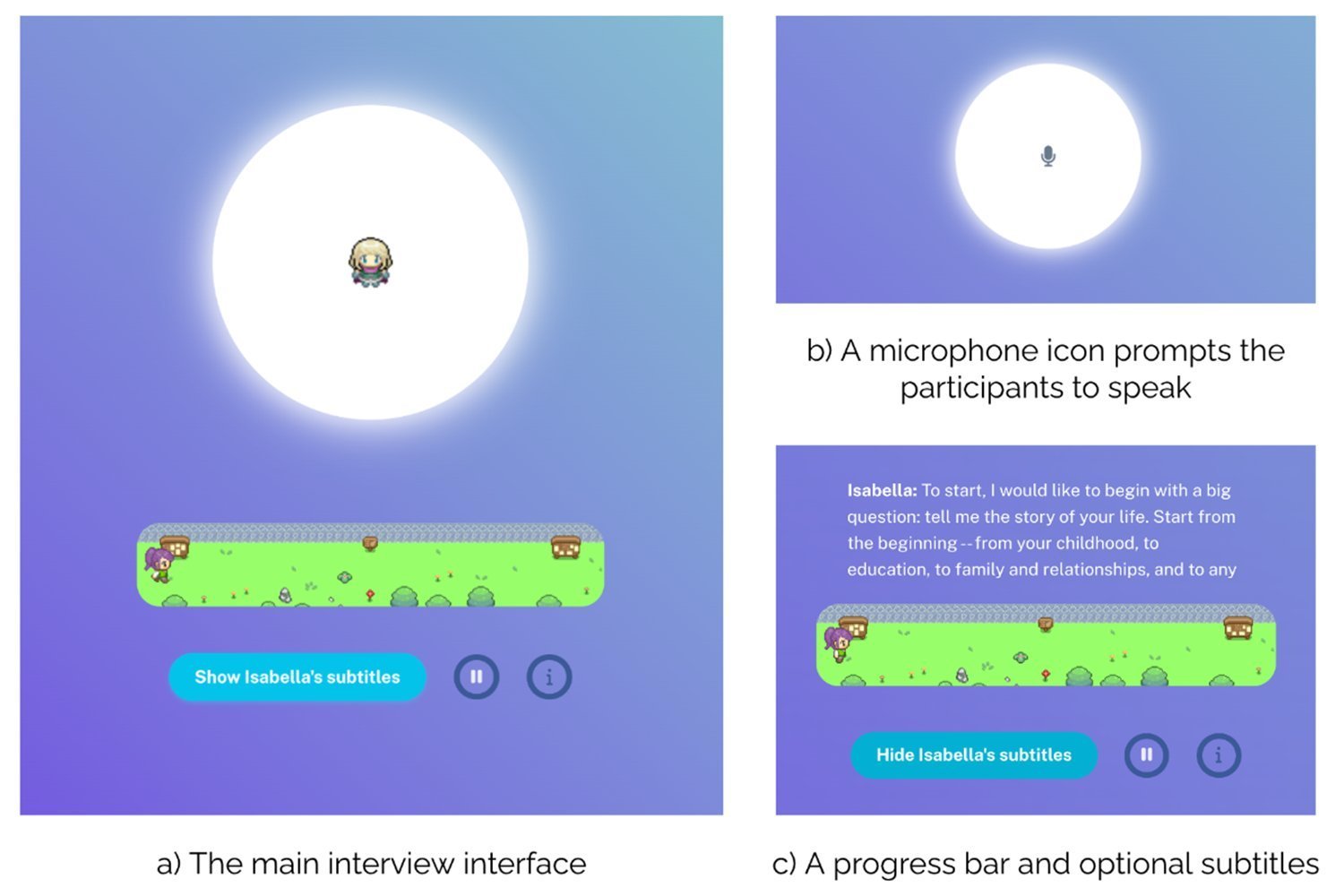

According to the paper, “The interview interface displays a 2-D sprite avatar representing the interviewer agent in the center, with the participant’s avatar shown below, walking toward a goal post to indicate progress. When the AI interviewer agent was speaking, it was signaled by a vibrant animation of the center circle with the interviewer avatar.”

The two-hour interviews produced transcripts that were, on average, 6,491 words long. The AI interviewer asked questions about race, gender, politics, income, social media use, the participants’ workload, and their family makeup. The researchers published the interview script and the questions the AI asked.

Those transcripts, fewer than 10,000 words each, were then fed into another LLM that the researchers used to spin up generative agents meant to replicate the participants. The researchers then put both the participants and their AI clones through more questions and economic games to see how they would compare. “When an agent is queried, the entire interview transcript is injected into the model prompt, instructing the model to simulate the person based on their interview data,” the paper said.
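To make that quoted step concrete, here is a minimal Python sketch of what injecting a whole transcript into a prompt could look like. It is not the researchers’ code: the function name `build_agent_prompt`, the prompt wording, and the multiple-choice layout are all assumptions made for illustration, and the call to an actual model is left out.

```python
def build_agent_prompt(transcript: str, question: str, options: list[str]) -> str:
    """Assemble one prompt that carries the full interview plus a survey question.

    Hypothetical helper: the wording below is an illustration, not the
    prompt used in the study.
    """
    # Number the answer options so the model can reply with a single digit.
    numbered = "\n".join(f"{i}. {opt}" for i, opt in enumerate(options, start=1))
    return (
        "Below is the full transcript of an interview with one person.\n"
        "Answer the question that follows the way this person would.\n\n"
        "--- INTERVIEW TRANSCRIPT ---\n"
        f"{transcript.strip()}\n"
        "--- END TRANSCRIPT ---\n\n"
        f"Question: {question}\n"
        f"Options:\n{numbered}\n"
        "Reply with the number of one option."
    )


if __name__ == "__main__":
    # Made-up transcript fragment, for demonstration only.
    demo = build_agent_prompt(
        "Interviewer: Tell me about your work.\nParticipant: I drive a delivery truck.",
        "Do you use social media every day?",
        ["Yes", "No"],
    )
    print(demo)
```

The resulting string would be sent to whatever model is in use. Because the whole transcript travels with every query, transcripts of a few thousand words keep each prompt small enough to be practical.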

This part of the process was as close to controlled as possible. The researchers used the General Social Survey (GSS) and the Big Five Personality Inventory (BFI) to examine how well the LLMs matched the people they were modeled on. They then ran participants and LLMs through five economic games to see how they compared.

Results were mixed. The AI agents answered about 85% of the GSS questions the same way as the real-world participants. They hit 80% on the BFI. However, the numbers dropped when the agents started playing economic games. The researchers offered the real-life participants cash prizes to play games like the Prisoner’s Dilemma and the Dictator Game.

In the Prisoner’s Dilemma, participants can choose to work together and both succeed or screw over their partner for a chance to win big. In the Dictator Game, participants must choose how to allocate resources to other participants. The real-life subjects could earn money on top of the original $60 for playing these.
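For readers unfamiliar with the two games, the short Python sketch below shows their basic mechanics. The payoff numbers are textbook-style placeholders chosen for illustration, not the amounts used in the study, and the function names are hypothetical.

```python
# Hypothetical payoffs: (my_move, partner_move) -> (my_payoff, partner_payoff).
# These values are illustrative only, not the stakes from the study.
PAYOFFS = {
    ("cooperate", "cooperate"): (3, 3),  # both work together and both do well
    ("cooperate", "defect"): (0, 5),     # I get burned, my partner wins big
    ("defect", "cooperate"): (5, 0),     # I win big at my partner's expense
    ("defect", "defect"): (1, 1),        # both defect and both do poorly
}


def prisoners_dilemma(my_move: str, partner_move: str) -> tuple[int, int]:
    """Return (my_payoff, partner_payoff) for one round."""
    return PAYOFFS[(my_move, partner_move)]


def dictator_game(endowment: int, amount_given: int) -> tuple[int, int]:
    """One player alone decides the split; returns (kept, given)."""
    if not 0 <= amount_given <= endowment:
        raise ValueError("amount_given must be between 0 and the endowment")
    return endowment - amount_given, amount_given


if __name__ == "__main__":
    print(prisoners_dilemma("defect", "cooperate"))
    print(dictator_game(10, 3))
```

Comparing a participant with an AI clone then comes down to checking whether both make the same move, or give away a similar share, when placed in the same situation.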

Faced with these economic games, the AI clones didn’t replicate their real-world counterparts nearly as well. “On average, generative agents achieved a normalized correlation of 0.66,” or about 60%.
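The article does not spell out what “normalized” means here. One plausible reading, assumed for this sketch, is that the correlation between an agent’s choices and a participant’s choices is divided by how well the participant’s own choices correlate across two separate sessions, so that a score of 1.0 would mean the agent is as consistent with the person as the person is with themselves. The Python below illustrates that assumption with hypothetical function names and is not taken from the paper.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Plain Pearson correlation between two equal-length lists."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of equal length with at least 2 items")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant data")
    return cov / sqrt(var_x * var_y)


def normalized_correlation(
    agent: list[float], human_first: list[float], human_retest: list[float]
) -> float:
    """Agent-vs-human correlation scaled by the human's own consistency.

    Assumption: "normalized" means dividing by the person's
    session-to-session correlation. This is an illustrative reading only.
    """
    return pearson(agent, human_first) / pearson(human_first, human_retest)
```

Under this reading, a normalized score below 1.0 means the agent tracks the person less closely than the person tracks their own earlier answers.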

The entire paper is worth reading if you’re interested in how academics are thinking about AI agents and the public. It didn’t take long for researchers to boil a human personality down into an LLM that behaved similarly. Given time and energy, they could probably bring the two closer together.

This is worrying to me. Not because I don’t want to see the human spirit reduced to a spreadsheet, but because I know this kind of technology will be used for ill. We’ve already seen dumber LLMs trained on public recordings trick grandmothers into giving bank information to an AI relative after a quick phone call. What happens when those machines have a script? What happens when they have access to purpose-built personas based on social media activity and other publicly available information?

What happens when a corporation or a politician decides that the public wants and needs something based not on its stated will, but on an approximation of it?