Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

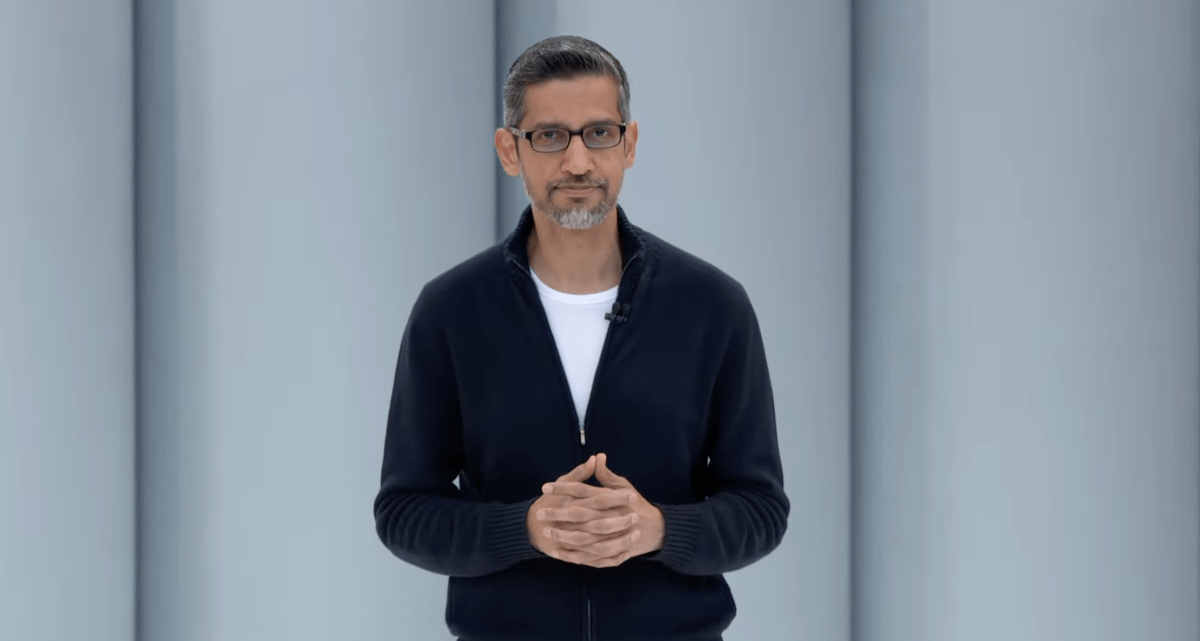

Google I/O 2025, Google's largest developer conference, was held at Shoreline Amphitheatre in Mountain View on Tuesday and Wednesday. We are bringing you the latest updates from the event.

I/O showcases product announcements from across Google's portfolio. There has been plenty of news relating to Android, Chrome, Google Search, YouTube, and Google's AI-powered chatbot, Gemini.

Google also hosted a separate event dedicated to Android updates: The Android Show. There, the company announced new ways to find lost Android phones and other items, additional device-level features for its Advanced Protection program, security tools to protect against scams and theft, and a new design language called Material 3 Expressive.

Here is everything announced at Google I/O 2025.

Gemini Ultra (available only in the United States) delivers the "highest level of access" to Google's AI-powered apps and services, according to Google. It costs $249.99 per month and includes Google's Veo 3 video generator, the company's new Flow video editing app, and a powerful AI capability called Gemini 2.5 Pro Deep Think mode, which has not launched yet.

AI Ultra comes with higher limits in Google's NotebookLM platform and in Whisk, the company's image remixing app. AI Ultra subscribers also get access to Google's Gemini chatbot in Chrome; some "agentic" tools powered by the company's Project Mariner technology; YouTube Premium; and 30TB of storage across Google Drive, Google Photos, and Gmail.

Deep Think is an "enhanced" reasoning mode for Google's flagship Gemini 2.5 Pro model. It allows the model to consider multiple answers to a question before responding, which boosts its performance on certain benchmarks.

Google did not go into detail about how Deep Think works, but it could be similar to OpenAI's o1-pro and upcoming o3-pro models, which likely use an engine to search for and synthesize the best solution to a given problem.

Deep Think is available to "trusted testers" through the API. Google has said that it is taking extra time to conduct safety evaluations before rolling out Deep Think widely.

Google claims that Veo 3 can generate sound effects, background noises, and even dialogue to accompany the videos it creates. Veo 3 also improves on its predecessor, Veo 2, in the quality of footage it can generate, Google says.

Veo 3 is available starting Tuesday in Google's Gemini chatbot app for subscribers to Google's $249.99-per-month AI Ultra plan, where it can be prompted with text or an image.

According to Google, Imagen 4 is fast, faster than Imagen 3, and it will soon get faster still. In the near future, Google plans to release a variant of Imagen 4 that is up to 10x faster than Imagen 3.

According to Google, Imagen 4 is capable of rendering "fine details" such as fabrics, water droplets, and animal fur. It can handle both photorealistic and abstract styles, creating images in a range of aspect ratios and at resolutions up to 2K.

Both Veo 3 and Imagen 4 will be used to power Flow, the company's AI-driven video tool geared toward filmmaking.

Google announced that the Gemini apps have more than 400 million monthly active users.

Gemini Live's camera and screen-sharing capabilities will roll out this week to all users on iOS and Android. The feature, powered by Project Astra, lets people hold near-real-time verbal conversations with Gemini while streaming video from their smartphone camera or screen to the AI model.

Google says that Gemini Live will start to integrate more deeply with its other apps in the coming weeks: it will soon be able to give directions from Google Maps, create events in Google Calendar, and make to-do lists with Google Tasks.

Google says it is updating Deep Research, Gemini's AI agent that generates thorough research reports, by allowing users to upload their own private PDFs and images.

Stitch is an AI-powered tool that helps people design web and mobile app front ends by generating the necessary UI elements and code. Stitch can be prompted to create app UIs with a few words or even an image, and it provides HTML and CSS markup for the designs it generates.

Stitch is somewhat more limited in what it can do than some other vibe coding products, but it offers a fair number of customization options.

Google has also expanded access to Jules, its AI agent aimed at helping developers fix bugs in their code. The tool helps developers understand complex code, create pull requests on GitHub, and handle certain backlog items and programming tasks.

Project Mariner is Google's experimental AI agent that browses and uses websites. Google says it has significantly updated how Project Mariner works, allowing the agent to take on nearly a dozen tasks at a time, and is now rolling it out to users.

For example, Project Mariner users can buy tickets to a baseball game or make other purchases online without visiting a third-party website themselves. People can simply chat with Google's AI agent, and it visits websites and takes actions on their behalf.

Project Astra, Google's low-latency, multimodal AI experience, will power an array of new experiences in Search, the Gemini AI app, and products from third-party developers.

Project Astra came out of Google DeepMind as a way to showcase nearly real-time, multimodal AI capabilities. The company says it is now building Project Astra glasses with partners including Samsung and Warby Parker. However, the company does not yet have a set launch date.

Google is rolling out AI Mode, the experimental Google Search feature that lets people ask complex, multi-part questions through an AI interface, to users in the United States this week.

AI Mode will support the use of complex data in sports and finance queries, and it will offer "try it on" options for apparel. Search Live, which is rolling out this summer, will let you ask questions based on what your phone's camera is seeing in real time.

Gmail is the first app to be supported with personalized context.

Beam, previously called Starline, uses a combination of software and hardware, including a six-camera array and a custom light field display, to let a user converse with someone as if they were in the same meeting room. An AI model converts video from the cameras, which are positioned at different angles and pointed at the user, into a 3D rendition.

Google's Beam boasts "near-perfect" millimeter-level head tracking and 60fps video streaming. When used with Google Meet, Beam provides an AI-powered real-time speech translation feature that preserves the original speaker's voice, tone, and expressions.

And speaking of Google Meet, Google announced that Meet is getting real-time speech translation.

Google is rolling out Gemini in Chrome, which will give people access to a new AI browsing assistant that helps them quickly understand the context of a page and complete tasks.

Gemma 3n is a model designed to run "smoothly" on phones, laptops, and tablets. It is available in preview starting Tuesday; according to Google, it can handle audio, text, images, and video.

The company also announced a ton of AI Workspace features coming to Gmail, Google Docs, and Google Vids. Most notably, Gmail is getting personalized smart replies and a new inbox-cleaning feature, and Vids is getting new ways to create and edit content.

Video Overviews are coming to NotebookLM, and the company rolled out SynthID Detector, a verification portal that uses Google's SynthID watermarking technology to help identify AI-generated content. Lyria RealTime, the AI model that powers its experimental music production app, is now available through an API.

Wear OS 6 brings a unified font to tiles for a cleaner app look, and Pixel Watches are getting dynamic theming that syncs app colors with watch faces.

The core promise of the new design reference platform is to let developers build better customization into their applications. The company is releasing a design guideline for developers along with Figma design files.

Google is beefing up the Play Store for Android developers with new tools to handle subscriptions, topic pages so that users can dive into specific interests, audio samples that give people a sneak peek into app content, and a new checkout experience that makes selling add-ons smoother.

The "topic browse" pages for movies and shows (U.S. only for now) will connect users to apps tied to those shows and movies. Developers are also getting dedicated pages for testing and releases, along with tools to monitor and improve their app rollouts. Developers can now also halt a live app release if a critical problem pops up.

Subscription management tools are also getting an upgrade with multi-product checkout. Devs will soon be able to offer subscription add-ons alongside main subscriptions, all under a single payment.

Android Studio is integrating new AI features, including "Journeys," an "agentic AI" capability that coincides with the release of the Gemini 2.5 Pro model. An "Agent Mode" will also be able to handle more intricate development processes.

Android Studio will also gain new AI capabilities, including an enhanced "crash insights" feature in the App Quality Insights panel. This improvement, powered by Gemini, will analyze an app's source code to identify potential causes of crashes and suggest fixes.