Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

When Cloudflare accused AI search engine Perplexity on Monday of stealthily scraping websites while ignoring a site's specific methods to block it, this was not a clear-cut case of an AI web crawler gone wild.

Many people came to Perplexity's defense. They argued that Perplexity accessing sites in defiance of the website owner's wishes, while controversial, is acceptable. And this is a debate that will certainly grow as AI agents flood the internet: Should an agent accessing a website on behalf of its user be treated like a bot? Or like a human making the same request?

Cloudflare is known for providing anti-bot crawling and other web security services to millions of websites. Essentially, Cloudflare's test involved setting up a new website on a new domain that had never been crawled by any bot, adding a robots.txt file that specifically blocked Perplexity's known crawlers, and then asking Perplexity about the website's content. And Perplexity answered the question.
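A test of this kind relies on how robots.txt rules are matched: by the name a crawler declares for itself. The sketch below is a minimal illustration using Python's standard `urllib.robotparser`, not Cloudflare's actual setup. The user-agent names `PerplexityBot` and `Perplexity-User`, the `example.com` URL, and the helper `is_allowed` are assumptions chosen for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for the test site: block the crawler names
# Perplexity is assumed to declare, allow everyone else.
ROBOTS_TXT = """\
User-agent: PerplexityBot
Disallow: /

User-agent: Perplexity-User
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    page = "https://example.com/page"  # placeholder URL
    generic_browser = "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7)"
    for agent in ("PerplexityBot", "Perplexity-User", generic_browser):
        print(agent, "->", is_allowed(agent, page))
```

Because the rules are keyed on the self-declared name, a request that presents a generic browser user-agent string falls through to the catch-all rule. robots.txt is advisory, so compliance depends on the client identifying itself honestly and choosing to obey.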

Cloudflare researchers found that the AI search engine used "a generic browser intended to impersonate Google Chrome on macOS" when its web crawler itself was blocked. Cloudflare CEO Matthew Prince posted the research on X, writing, "Some supposedly 'reputable' AI companies act more like North Korean hackers. Time to name, shame, and hard block them."

However, many people disagreed with Prince's assessment that this was actual bad behavior. Those defending Perplexity on sites like X and Hacker News pointed out that what Cloudflare seemed to document was the AI accessing a specific public website when its user asked about that specific website.

One person on Hacker News wrote, "Why would the LLM accessing the website on my behalf be in a different legal category as my Firefox web browser?"

A Perplexity spokesperson previously denied to TechCrunch that the bots were the company's and called Cloudflare's blog post a sales pitch for Cloudflare. Then on Tuesday, Perplexity published a blog post in its defense (one that generally attacks Cloudflare), claiming the behavior came from a third-party service it uses occasionally.

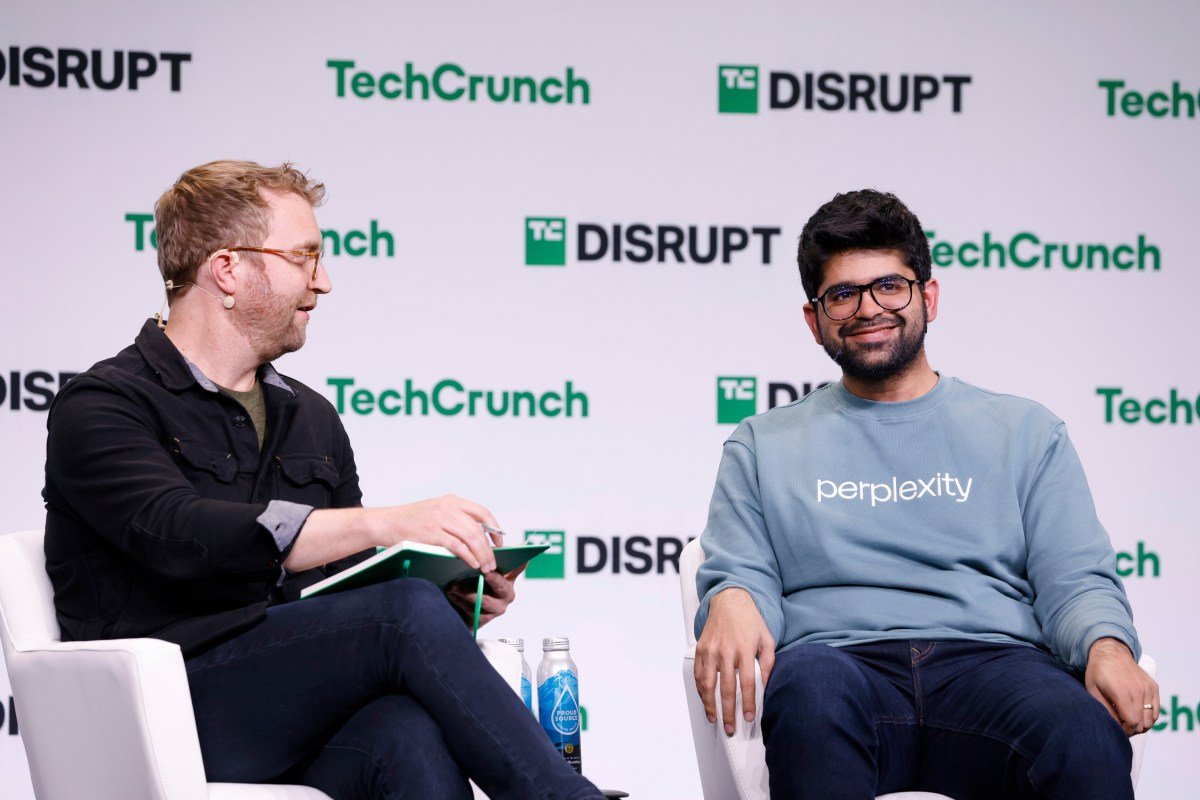

TechCrunch event

San Francisco

|

October 27-29, 2025

However, the crux of Perplexity's post made the same appeal as its online defenders did.

“The difference between automated crawling and user-driven fetching isn't just technical; it's about who gets to access information on the open web,” the post said. “This controversy reveals that Cloudflare's systems are fundamentally inadequate for distinguishing between legitimate AI assistants and actual threats.”

Perplexity's accusations aren't exactly fair, either. One argument that Prince and Cloudflare used for calling out Perplexity's methods was that OpenAI doesn't behave in the same way.

“OpenAI is an example of a leading AI company that follows these best practices. They respect robots.txt and do not try to evade either a robots.txt directive or a network level block,” Prince wrote in his post.

Web Bot Auth is a Cloudflare-supported standard being developed by the Internet Engineering Task Force that aims to create a cryptographic method for identifying AI agent web requests.

The debate comes as bot activity reshapes the internet. As TechCrunch has previously reported, bots seeking to scrape massive amounts of content to train AI models have become a menace, especially to smaller sites.

For the first time in the internet's history, bot activity is currently outstripping human activity online, with AI traffic accounting for over 50%, according to Imperva's Bad Bot Report released last month. Most of that activity is coming from LLMs. However, the report also found that malicious bots now make up 37% of all internet traffic. That activity includes everything from persistent scraping to unauthorized login attempts.

Until LLMs, the internet generally accepted that websites could and should block most bot activity, given how often it was malicious, by using CAPTCHAs and other services (such as Cloudflare). Websites also had a clear incentive to work with specific good actors, such as Google, guiding it on what not to index through robots.txt. Google indexed the internet, which sent traffic to sites.

Now, LLMs are eating an increasing amount of that traffic. Gartner predicts that search engine volume will drop by 25% by 2026. Right now, people tend to click website links from LLMs at the point where they are most valuable to the website, which is when they are ready to conduct a transaction.

However, if people adopt agents as the tech industry predicts they will, to arrange our travel, book our dinner reservations, and shop for us, would websites hurt their own business interests by blocking them? The debate on X captured the dilemma perfectly:

“I want Perplexity to visit any public content on my behalf when I give it a request/task!” wrote one person in response to Cloudflare calling Perplexity out.

“What if the site owners don’t want it? They just want you [to] directly visit the home, see their stuff,” argued another, pointing out that the site owner who created the content wants the traffic and potential ad revenue, not to let Perplexity take it.

“This is why I can’t see ‘agentic browsing’ really working – this is a much harder problem than people think. Most website owners will just block,” a third predicted.